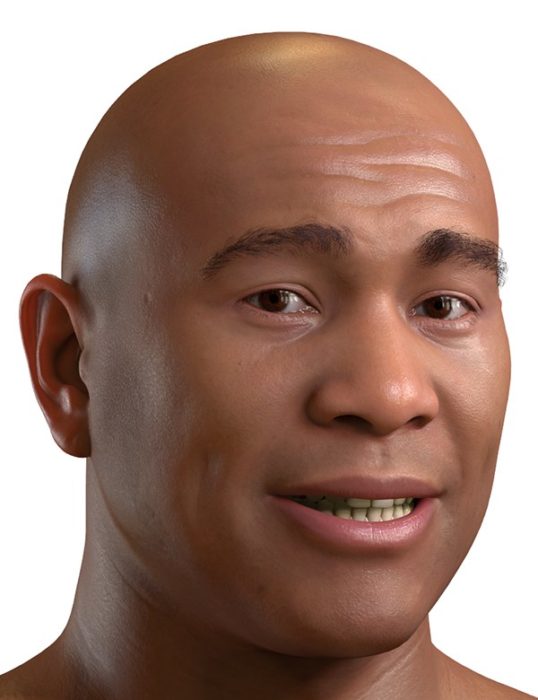

JALI’s automated lip sync and facial animation software empowers digital storytellers to easily craft naturally, richly expressive characters and avatars.

JALI Powered.

JALI offers production teams a powerful, flexible, and easy-to-use suite of tools to direct unforgettable digital performances with best-in-class automated lip sync, expressive multilingual facial animation, and high-performance rigging and pipeline solutions.

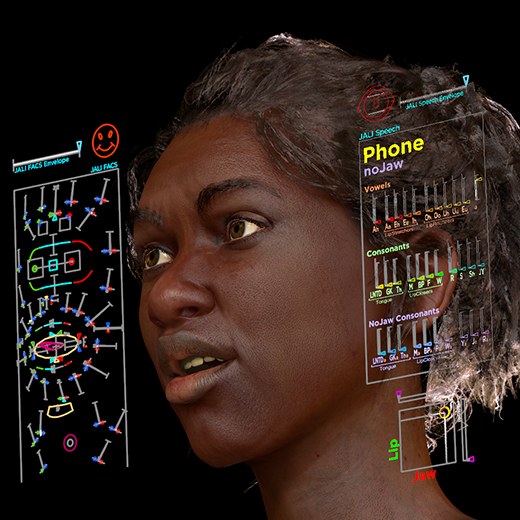

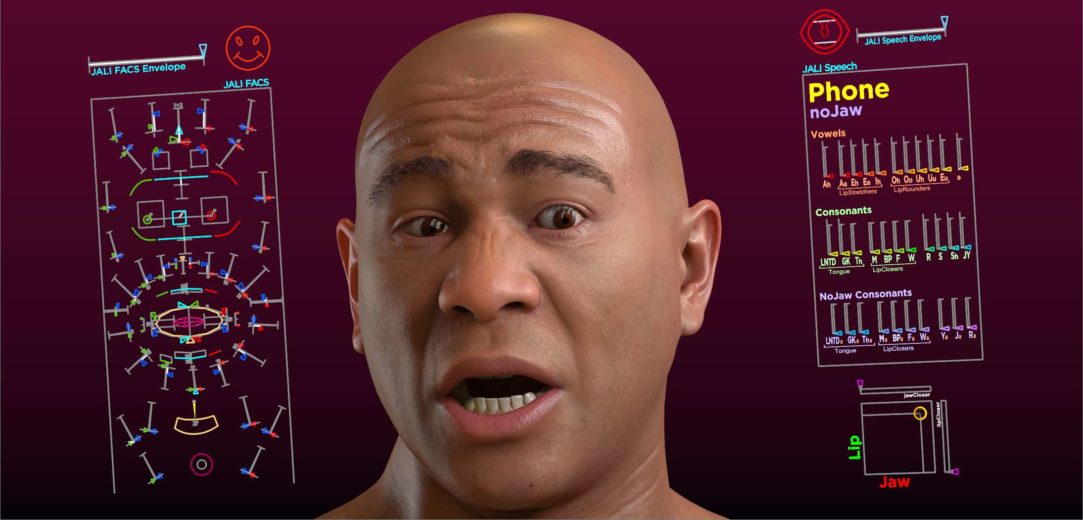

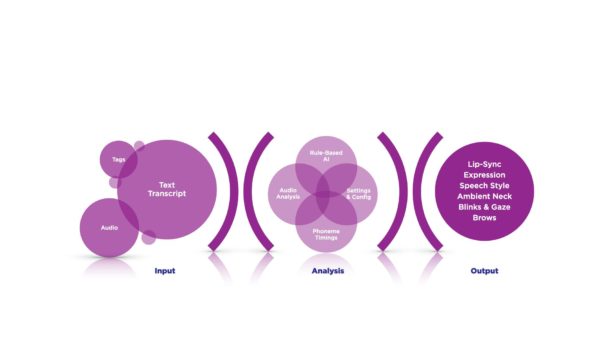

Automatic lip sync in seconds.

Generate expressive, language-specific lip synchronization from audio and text (or TTS inputs), with fully customizable speech styles, dynamic facial performances, and easy-to-edit animation curves.

Generate expressive full-facial animation.

Animate and direct full character performance with eye motion (blink, gaze, saccades), brows, custom expression settings, speech settings, character profiling, emotion tagging, asymmetry, and head and neck motion.

Quality at scale.

JALI’s entire workflow can be fully automated through scripting. The software features a user-friendly interface for handling batch animation, rig connections, and exporting.

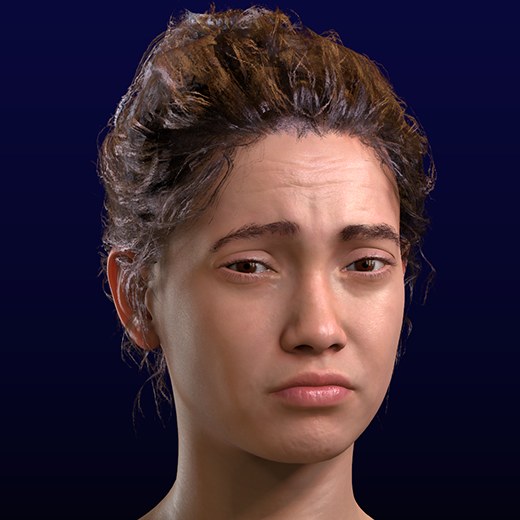

Animate digital actors for any performance.

Hero characters and NPCs, digital assistants, VR training, and interactive chatbots all need believable and compelling facial animation and lip sync. With JALI, you can direct the right performance for a given context, whether in production or in real time when using text-to-speech.